Elasticsearch Cardinality: Approximate Counting Based on HyperLogLog++

Preface

Many of our business scenarios rely on distinct (deduplicated) counts. To do this well, we need a deep understanding of the tools involved. Cardinality aggregation is a single-value metrics aggregation provided by Elasticsearch that calculates an approximate count of distinct values. The result is approximate because it is computed with the HyperLogLog++ algorithm, so understanding cardinality aggregation comes down to understanding HyperLogLog++.

Algorithm principle

HyperLogLog++ is an algorithm for approximately solving the cardinality problem (that is, counting the number of distinct elements in a set). It is an improved version of the HyperLogLog algorithm. Its main advantages over the original are higher accuracy, particularly for small cardinalities, and more efficient memory use.

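HyperLogLog++ is built on two main ideas:

- Hash function: map each element to a uniformly distributed, pseudo-random hash value, and use a prefix of the hash value as the bucket index;

- Register: give each bucket a small register that records the largest number of leading zeros (plus one) seen in the remaining bits of the hash values that fall into that bucket. The cardinality is estimated from these registers, not from a count of the elements in each bucket.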
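
To make the two ideas concrete, here is a minimal sketch of plain HyperLogLog in Python. It is not the bias-corrected HyperLogLog++ and not Elasticsearch's implementation: the class name `TinyHLL`, the SHA-1 based `hash64` helper, and the choice of 2^12 buckets are all assumptions made for illustration. Each bucket's register keeps the largest "leading zeros plus one" rank seen among the hashes routed to it, and the estimate is derived from a harmonic mean over the registers.

```python
import hashlib
import math


def hash64(value: str) -> int:
    """Map a value to a 64-bit integer (truncated SHA-1; an illustrative choice)."""
    return int.from_bytes(hashlib.sha1(value.encode("utf-8")).digest()[:8], "big")


class TinyHLL:
    """Plain HyperLogLog sketch (hypothetical helper, not the HLL++ variant)."""

    def __init__(self, p: int = 12):
        self.p = p                    # number of prefix bits used as bucket index
        self.m = 1 << p               # number of buckets (registers)
        self.registers = [0] * self.m

    def add(self, value: str) -> None:
        h = hash64(value)
        bucket = h >> (64 - self.p)              # first p bits pick the bucket
        rest = h & ((1 << (64 - self.p)) - 1)    # remaining 64 - p bits
        # rank = leading zeros in `rest` plus one (position of the first 1-bit)
        rank = (64 - self.p) - rest.bit_length() + 1
        if rank > self.registers[bucket]:
            self.registers[bucket] = rank        # keep only the maximum

    def estimate(self) -> float:
        alpha = 0.7213 / (1 + 1.079 / self.m)    # bias constant, valid for m >= 128
        raw = alpha * self.m * self.m / sum(2.0 ** -r for r in self.registers)
        zeros = self.registers.count(0)
        if raw <= 2.5 * self.m and zeros:
            # small-range correction: fall back to linear counting
            return self.m * math.log(self.m / zeros)
        return raw


hll = TinyHLL(p=12)
for i in range(10000):
    hll.add(f"user-{i}")
    hll.add(f"user-{i}")   # duplicates do not change the registers
print(round(hll.estimate()))
```

Memory depends only on the number of registers, not on how many values are added, which is the property the cardinality aggregation relies on.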

Algorithm performance

To compute an exact count, you would load the values into a hash set and return the size of the set. This does not scale to high-cardinality sets and/or large values, because the memory required and the need to transfer the per-shard sets between nodes would consume too many cluster resources.
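
The cost of the exact approach can be seen in a small sketch. The shard contents and the function name `exact_distinct_count` below are made up for illustration; the point is that per-shard counts cannot simply be summed, so every per-shard set has to be shipped and merged.

```python
from typing import Iterable, List, Set


def exact_distinct_count(shards: List[Iterable[str]]) -> int:
    """Exact distinct count: build one set per shard, then merge them all."""
    shard_sets: List[Set[str]] = [set(values) for values in shards]
    merged: Set[str] = set()
    for shard_set in shard_sets:
        merged |= shard_set   # the whole per-shard set must reach the merging node
    return len(merged)


# Hypothetical data: the same value can live on several shards.
shards = [["a", "b", "c"], ["b", "c", "d"], ["d", "e"]]

print(exact_distinct_count(shards))        # 5
print(sum(len(set(s)) for s in shards))    # 8, summing per-shard counts overcounts
```

Both the memory held in `merged` and the data transferred grow with the number of distinct values, which is what makes this approach impractical at high cardinality.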

Cardinality aggregation is based on the HyperLogLog++ algorithm, which counts based on the hashes of the values. The algorithm has the following properties:

- Configurable precision, which determines how memory is traded for accuracy;

- Very high accuracy on low-cardinality sets;

- Fixed memory usage: whether the set contains tens or billions of unique values, memory usage depends only on the configured precision.

For a precision threshold of c, the implementation used by Elasticsearch requires about c * 8 bytes of memory.

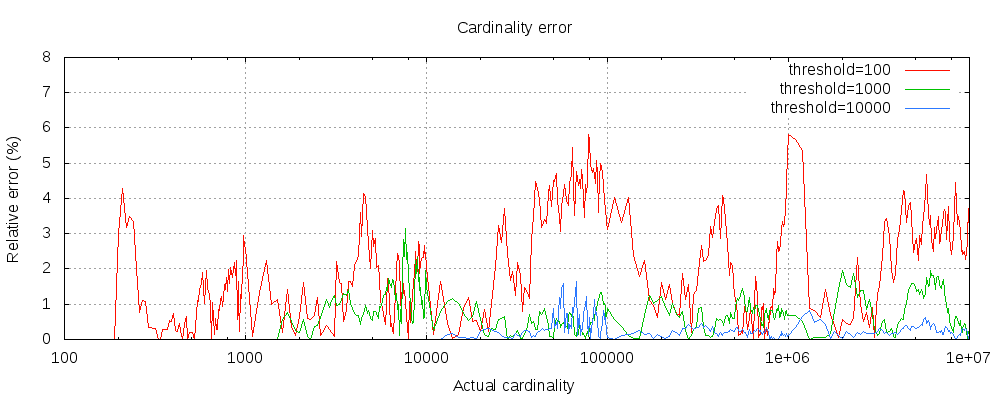

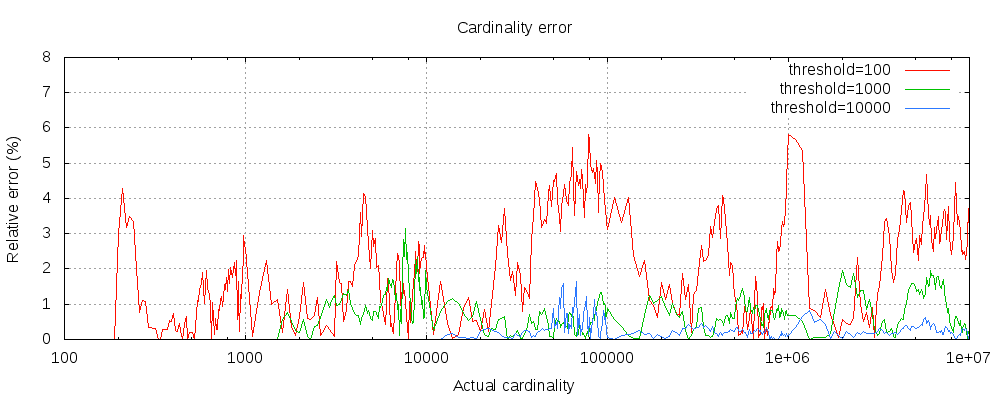

The chart shows how the error varies below and above the precision threshold.

For all three thresholds in the chart, counts are accurate up to the configured threshold. Errors do appear, but the results remain very close to the true value. In practice, accuracy depends on the data set; generally speaking, most data sets show good accuracy. Note also that even with a threshold as low as 100, the error stays very low when counting millions of items (1-6% in the chart).

HyperLogLog++ depends on the number of leading zeros in the hashed values, so the exact distribution of hashes in a data set also affects the accuracy of the cardinality aggregation.

ES source code

Project address: https://github.com/elastic/elasticsearch

Code location:

Source code of third-party library

Mainly Golang:

Points to note

Cardinality aggregation uses an approximation algorithm. precision_threshold determines the precision of the result.

- The larger precision_threshold is, the higher the precision and the closer the result is to the true distinct count;

- The larger precision_threshold is, the more resources are consumed;

- The default value of precision_threshold is 3000, and the maximum supported value is 40000;

- If you need distinct counts over a very high-cardinality field, you can optimize by pre-computing the hashes at index time. The corresponding plugin is mapper-murmur3; murmur3 is a hash algorithm.
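
As a rough illustration of these settings, the request bodies can be written out as Python dictionaries and serialized to JSON. The field name `user_id` and the aggregation name `distinct_users` are hypothetical; the `murmur3` sub-field assumes the mapper-murmur3 plugin is installed on the cluster.

```python
import json

# Hypothetical field name; replace it with a real field from your index.
FIELD = "user_id"

# Search body: cardinality aggregation with an explicit precision_threshold.
cardinality_request = {
    "size": 0,
    "aggs": {
        "distinct_users": {
            "cardinality": {
                "field": FIELD,
                "precision_threshold": 10000,  # default 3000, maximum 40000
            }
        }
    },
}

# Index mapping: store a pre-computed murmur3 hash in a sub-field
# (requires the mapper-murmur3 plugin).
murmur3_mapping = {
    "mappings": {
        "properties": {
            FIELD: {
                "type": "keyword",
                "fields": {"hash": {"type": "murmur3"}},
            }
        }
    }
}

# Search body that aggregates on the pre-hashed sub-field instead.
prehashed_request = {
    "size": 0,
    "aggs": {
        "distinct_users": {
            "cardinality": {"field": f"{FIELD}.hash", "precision_threshold": 10000}
        }
    },
}

print(json.dumps(cardinality_request, indent=2))
```

The mapping body would be sent when creating the index and the search bodies to the index's `_search` endpoint; how they are sent (client library, curl, Kibana) is left open here.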

The memory overhead of cardinality aggregation can be estimated as follows:

If precision_threshold is 10000, the memory cost is 10000 * 8 / 1024 ≈ 78 KB, or roughly 80 KB.
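
The same arithmetic as a small helper, for comparing several thresholds. The function name `cardinality_memory_bytes` is made up for this sketch, and the result is only the c * 8 approximation per aggregation instance, not a measured value.

```python
MAX_PRECISION_THRESHOLD = 40000  # maximum supported value


def cardinality_memory_bytes(precision_threshold: int) -> int:
    """Approximate memory per cardinality aggregation: threshold * 8 bytes."""
    # Assumption: thresholds above the maximum behave like the maximum.
    effective = min(precision_threshold, MAX_PRECISION_THRESHOLD)
    return effective * 8


for threshold in (100, 3000, 10000, 40000):
    kib = cardinality_memory_bytes(threshold) / 1024
    print(f"precision_threshold={threshold}: about {kib:.1f} KB")
```

If the aggregation runs inside a terms aggregation, this cost applies once per bucket, so the total can grow quickly.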

In general, the default value is sufficient; it was chosen as a compromise between precision and memory overhead.

However, some business scenarios require higher accuracy, and the only option is to raise the threshold. A common way to pick the new value is to set it close to the average observed distinct count. For example, if the distinct count observed with the default value averages about 10000, we can try raising the threshold to 10000. The side effect is that this may not hold up under high-frequency requests: the larger memory overhead crowds out other resources, which can cause long GC pauses, stall other threads, and lead to service errors.

Link

This article was written by Chakhsu Lau and is licensed under the Creative Commons Attribution 4.0 International License.

Unless marked as a reprint or credited to another source, all articles on this website are original or translated by this website. Please credit the author when reprinting.