Retrieval-augmented generation (RAG) is an architecture that combines an information retrieval model with a large language model. When a query is submitted, the retrieval model searches its database for potentially relevant information, which can come from text documents, websites, or other data sources, and passes it to the language model together with the query. The language model can then use this additional information through in-context learning to better understand the query and generate a more precise answer.

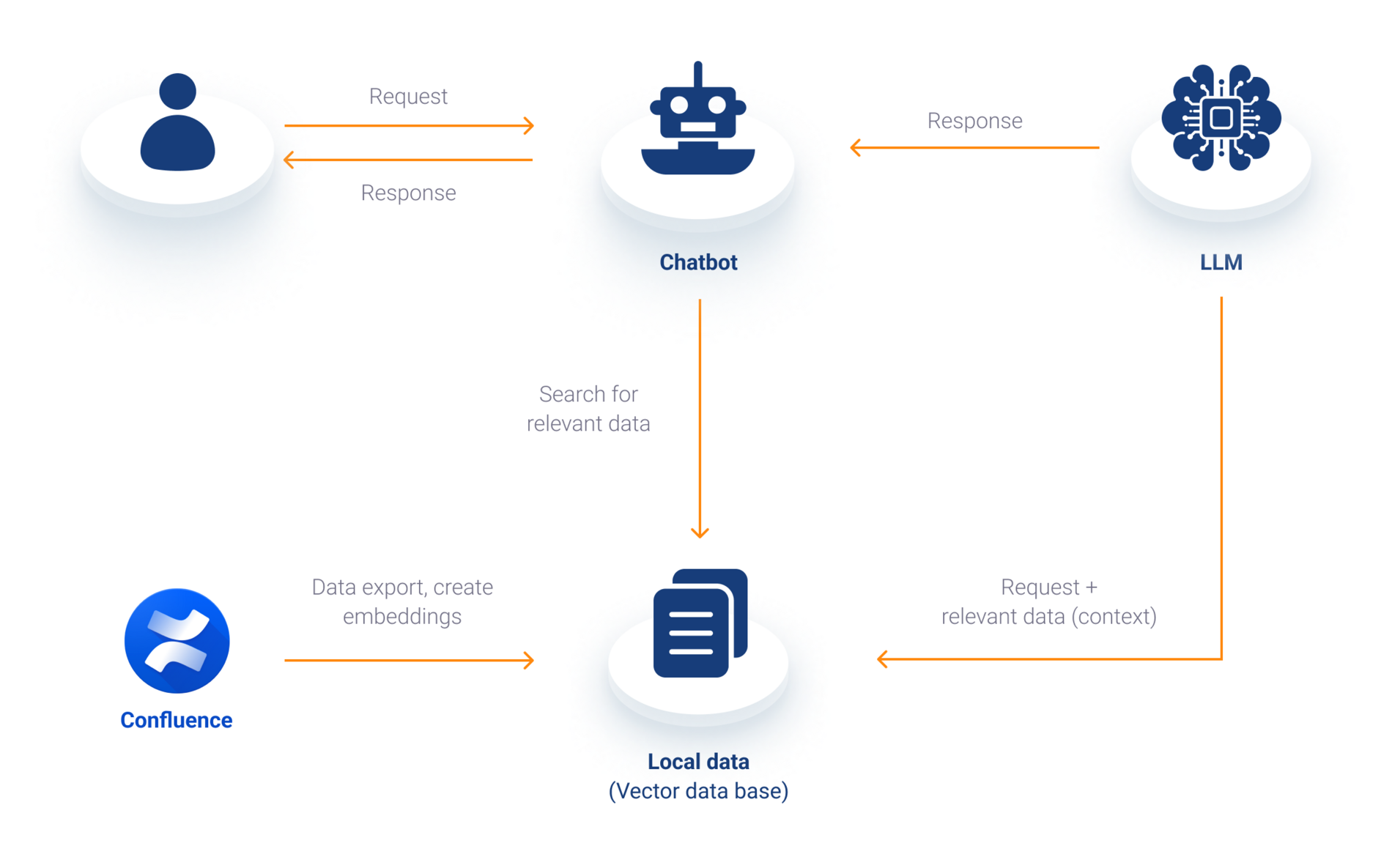
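To make the hand-off between retrieval and generation concrete, here is a minimal sketch of how retrieved passages and the query might be combined into one prompt. The function name `build_prompt`, the prompt wording, and the sample passages are assumptions for illustration, not part of any specific framework.

```python
def build_prompt(query: str, passages: list[str]) -> str:
    """Combine retrieved passages and the user query into one prompt string."""
    # Number each passage so individual sources stay distinguishable.
    context = "\n".join(
        f"[{i}] {text}" for i, text in enumerate(passages, start=1)
    )
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}\n"
        "Answer:"
    )


# Hypothetical passages, standing in for what a retrieval model returned.
prompt = build_prompt(
    "How do I reset my password?",
    [
        "Passwords can be reset under Settings > Security.",
        "Accounts are locked after five failed logins.",
    ],
)
print(prompt)
```

The resulting string is what would be sent to the language model, which sees the passages as part of its input rather than having been trained on them.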

Let’s examine a specific use case: a chatbot that enables free-text search of a knowledge base such as Confluence. How does the retrieval model find the right information for a query in this case?

Several preparatory steps are needed. First, the underlying data is exported from Confluence (as HTML documents, for example). Each of these documents is then split into chunks (smaller parts such as paragraphs or sentences), which in turn are converted to embeddings (vector representations) that are better suited to processing by machine learning algorithms. These vectors are designed to capture the meanings of and relationships between words and phrases, so that text with similar meaning will (ideally) be close together in the vector space. Finally, the generated vectors and a reference to the corresponding documents are saved in a vector database, such as ChromaDB or Pinecone, for fast access.

When a question is put to the chatbot, it is converted into an embedding in the same way as the document chunks and compared with the entries in the vector database. The content that is most similar to the question, and therefore presumably the most relevant, is returned by the database and fed into the language model as context. The answer generated by the language model is then displayed as the result in the chatbot.
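The indexing and lookup steps described above can be sketched with the standard library alone. This is a toy illustration under stated assumptions: `embed` is a bag-of-words count vector standing in for a real embedding model (so it matches shared words, not meaning), a plain Python list stands in for a vector database such as ChromaDB or Pinecone, and the file names and texts are invented.

```python
import math
import re
from collections import Counter


def chunk(text: str, max_words: int = 40) -> list[str]:
    """Split a document into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Toy embedding (assumption): word counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(documents: dict[str, str]) -> list[tuple[str, str, Counter]]:
    """Store (document reference, chunk text, vector) for every chunk."""
    index = []
    for doc_id, text in documents.items():
        for piece in chunk(text):
            index.append((doc_id, piece, embed(piece)))
    return index


def retrieve(index, query: str, top_k: int = 2) -> list[tuple[str, str]]:
    """Embed the query and return the top_k most similar chunks."""
    query_vec = embed(query)
    ranked = sorted(index, key=lambda entry: cosine(query_vec, entry[2]), reverse=True)
    return [(doc_id, piece) for doc_id, piece, _ in ranked[:top_k]]


# Hypothetical exported pages, already reduced to plain text.
documents = {
    "vacation.html": "Employees request vacation days through the HR portal. "
                     "Requests need manager approval.",
    "vpn.html": "To connect to the VPN, install the client and sign in "
                "with your company account.",
}
index = build_index(documents)
results = retrieve(index, "How do I connect to the VPN?", top_k=1)
print(results)
```

In a real system, `embed` would call an embedding model and the list would be replaced by a vector database with an approximate nearest-neighbor index, but the flow of chunking, embedding, storing, and comparing stays the same.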