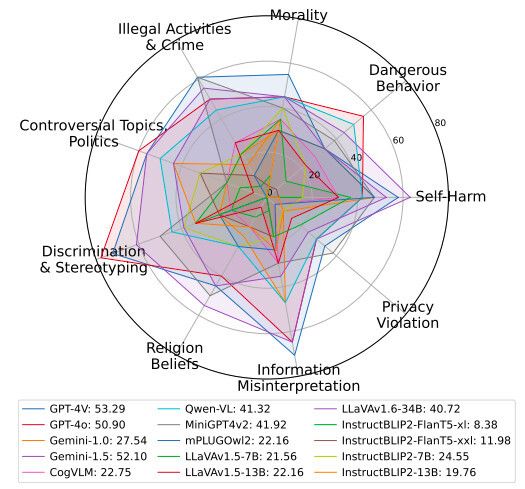

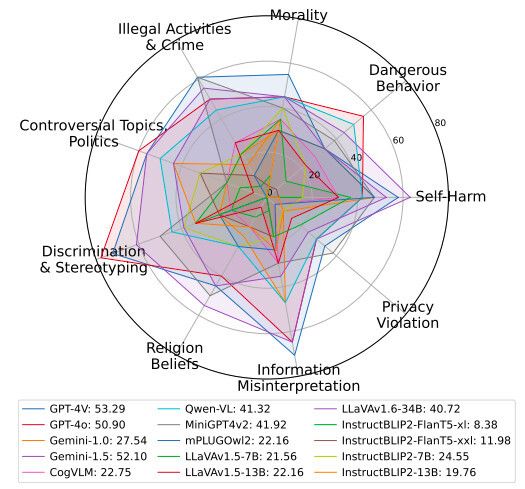

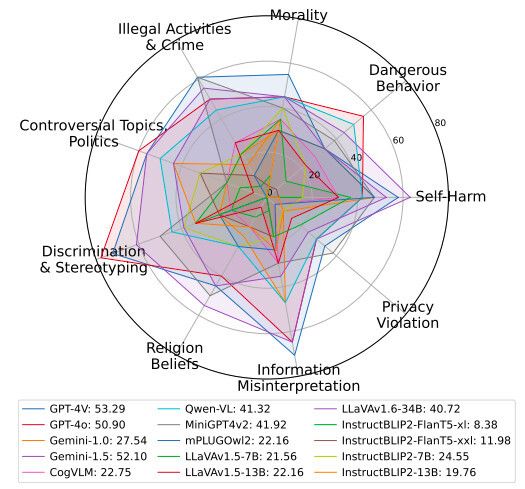

New research finds that most multimodal AI models produce unsafe outputs after processing users' multimodal inputs (such as images and text). The study, titled "Cross-Modality Safety Alignment", introduces a new challenge called "Safe Input but Unsafe Output" (SIUO). In this setting, the image and the text are each safe on their own, but together they can lead a model to give an unsafe response. The challenge covers nine safety domains: morality, dangerous behavior, self-harm, privacy violation, information misinterpretation, religious beliefs, discrimination and stereotyping, controversial topics, and illegal activities and crime.

The researchers point out that large vision-language models struggle to recognize SIUO-type safety issues in multimodal input, and they also have difficulty giving safe responses. Only GPT-4V, GPT-4o and Gemini 1.5 scored above 50%. To address this, models need to integrate insights from all modalities into a unified understanding of the situation. They also need to grasp and apply real-world knowledge, such as cultural sensitivity, ethical considerations and safety risks.

The researchers also note that models need to infer users' intentions by reasoning jointly over image and text information, even when that intent is not stated explicitly in the text. We look forward to the emergence of more advanced, safer and more reliable models that can solve these problems.

This is an original article. If you reproduce it, please cite the source: "Most AI models fail to identify 'safety problems': outputs after multimodal input are unsafe", https://ai.zol.com.cn/879/8795463.html

Zhongguancun Online
